1. Introduction

Artificial intelligence (AI) applications are rapidly becoming embedded in and entangled with our lives. In nursing, various forms of AI have become standard tools in practice, and nursing regulators are starting to recognise that this may require a new set of skills and competencies to ensure that health care providers can use these tools safely, ethically, and responsibly.[1,2] Nursing practice is underpinned by strong regulatory requirements, and the introduction of AI in healthcare means that nursing professional bodies and higher education institutions both have a vested interest in ensuring that AI-related interventions have a positive and meaningful impact on patient care. This paper explores important considerations that nursing educators must reflect on when using AI, and in particular the unique conditions within which they are expected to operate.

The paper first analyses the current and emerging policy contexts in which AI is being constructed within healthcare, and in particular what is emanating internationally within nursing education. While the introduction of AI in healthcare presents immense opportunities, the paper then addresses specific risks associated with the technology, using as an example the nurse's role in patient care planning and decision making with AI. The paper then poses a number of questions about the introduction of AI in healthcare, in particular: how AI generates outputs, the nature of what it generates, and the implications of AI for the profession and wider society. On the latter, four 'D' impacts are discussed: Deskilling, Dehumanising, Dishonesty, and Differential access.

The paper then presents the '21st Century AI attributes' in which nurses should be skilled, followed by a curriculum-focused discussion of mixed research results on the impact of AI on teaching and learning in nursing education. Critical thinking, a key skill in nursing practice, is discussed in conjunction with the risks associated with introducing AI into nursing education: educators and students alike may come to over-rely on AI, and increased dependency may diminish their ability to solve problems independently. Two positive examples of where AI can make a significant contribution to nursing education are then introduced: first, in simulation laboratories, where students can be exposed to a multitude of dynamic and diverse clinical scenarios; and second, as a personal tutor.

Ethical considerations pertaining to the introduction of AI in nursing education are discussed, together with the need to re-evaluate the assessment of student learning in light of the explosion of generated content that students may consume uncritically. Finally, the paper introduces a promising curricular framework, the 'Constraints Led Approach' (CLA), whose theoretical and applied foundations lie in sport and skill acquisition but which has the potential to support the inclusion of AI in nurse education.

2. Methodology

Given the rapid surge in attention to generative AI and the concomitant exponential growth in related academic (and non-academic) publications in the field, a flexible and iterative approach was essential to guide the writing process effectively. Consequently, the authors adopted a non-systematic or non-Cochrane[3] narrative review approach to structure the selection and synthesis of the current literature on the topic. A non-systematic narrative literature review, variously described simply as a literature review or a narrative review, is often justified when the aim is to provide a broad and comprehensive synthesis of the available literature on a topic, especially when flexibility is required due to the nature of the subject.[4,5]

For topics that are under-researched, new or rapidly evolving, a non-systematic narrative review approach can be particularly useful.[6] This approach "can also help to provide an overview of areas in which the research is disparate and interdisciplinary"[7] (p. 333). Unlike systematic reviews, which follow strict protocols for study inclusion and data synthesis, non-systematic narrative reviews allow for a more nuanced and flexible approach. This enables the reviewer to survey a wide range of outputs, including (but not necessarily limited to) theoretical discussions, grey literature, opinion pieces, empirical research, policy documents and case studies.[4] While some of these sources may not meet the strict inclusion and exclusion criteria of systematic reviews, they are nonetheless central to understanding the development of a field, particularly emerging fields such as generative AI and its application to health care education. Policymakers, institutional leaders and regulatory bodies increasingly seek, as a matter of course, to ensure that their decisions are evidence-based or, at the very least, evidence-informed. The narrative review offers a pragmatic way of synthesising literature that can inform policy in rapidly evolving fields.

To ensure a comprehensive review, the literature search strategy involved multiple academic databases, including Wiley, EBSCO, Taylor and Francis, Health Research Premium (ProQuest) and Google Scholar. These databases were selected for their wide coverage of disciplines and developments relevant to the topic. Search terms were carefully chosen to reflect the primary focus of the review and included keywords and phrases such as "Artificial Intelligence", "education", "teaching and learning", "nursing" and "Health Care Professionals" (this short list is intended to be illustrative rather than exhaustive). Boolean operators were used to combine these terms and to balance comprehensiveness with specificity. The search was limited to studies published between 2022 and 2024 to capture recent developments in the field. Given the authors' language capabilities, and while acknowledging the inherent limitations of an Anglo-centric approach, only papers published in English were included. While database searches provided many of the sources used in this paper, a snowball search strategy[8] was also applied. This involved identifying additional references and citations drawn from the core articles, thus expanding the breadth of relevant literature.

The synthesis process focused on identifying and analysing patterns and themes within the collected literature. By employing a narrative synthesis approach,[9] this review was able to accommodate the variability in study designs and literature sources, providing a comprehensive interpretation that highlighted areas of convergence and divergence and, consequently, aspects that could further inform this paper. This methodology was particularly appropriate given the diverse nature of the sources included, enabling the review to draw meaningful conclusions that extend beyond the limitations of individual studies.[7]

Although the narrative review approach allows for a broad and flexible exploration of the literature, it is not without limitations. Because narrative synthesis is more subjective than a systematic review, the potential for selection and interpretative biases exists. To mitigate these biases, the search and selection processes were documented throughout, so that they remain open to scrutiny. However, the interpretative component inherent to this approach means that some subjectivity is unavoidable.[10]

3. Policy context

As society grapples with what the rapid development and deployment of artificial intelligence means for education, healthcare, and labour, frameworks are emerging that attempt to guide practice towards the ethical, safe, and responsible use of AI within professional contexts. There is growing recognition that rigid policy approaches may not be fit for purpose for a technology as invasive, pervasive, and rapidly changing as AI. A number of foundational frameworks have been developed over the last two decades to guide the ethical and responsible development and use of AI (e.g. the Asilomar AI Principles,[11] the Montreal Declaration on Responsible AI,[12] and the European Commission's Ethics Guidelines for Trustworthy Artificial Intelligence[13]), but every field needs to adapt these frameworks to its own professional context, identifying the specific boundaries needed to safeguard its practice and values. The World Health Organisation (WHO) is strongly advocating for AI as part of the digital future of healthcare globally, attempting to nurture an AI ecosystem that considers safety, equity and sustainability. While acknowledging that AI does present potential challenges to patients, health professionals and health providers, the WHO has stated that its "vision is to foster digital frontiers and nurture an AI ecosystem for safety, equity, and the advancement of the Sustainable Development Goals, contributing to a healthier world."[2]

In the case of nursing and allied health professionals, some regulatory and representative bodies have started releasing their own guidance and position statements on what these technologies mean for their professions. The Canadian Association of Schools of Nursing released a webinar in May 2024 on embracing ChatGPT in nursing education and practice.[1] The association encouraged nursing educators to consider the practical applications of generative AI in classroom teaching, clinical education, simulation, and lab environments, as well as its emerging role in nursing practice. The Australian College of Nursing (ACN)[14] has suggested several applications of AI in nursing practice, including Clinical Decision Support Systems that provide evidence-based recommendations, predictive analytics tools that forecast patient outcomes, and telehealth platforms that enable remote patient monitoring. These technologies can enhance early identification of health issues, support clinical decision-making, and extend care to patients in remote areas. However, the ACN does not overlook the challenges of AI use, which include accuracy and reliability; bias and fairness; ethical and legal issues; and problems of integration with existing systems.

In the US, the National Academy of Medicine's (NAM)[15] AI Code of Conduct (CICC) Principles emphasise the importance of developing and using AI in health and medicine in a way that is safe, effective, equitable, efficient, accessible, transparent, accountable, secure, and adaptive. These principles are intended to ensure that AI applications prioritise people's needs, prevent harm, improve health outcomes, and maintain fairness and security. However, notwithstanding the technological advances, opportunities and challenges that 21st century nurses face, certain immutable realities remain for the nursing profession. As the American Nurses Association's[16] position statement articulates: "Integration of AI in practice must not alter the goals of patient care. Compassion, trust, and caring are foundational principles in the nurse-patient relationship" (p. 5). Therefore, as AI reshapes healthcare, the nursing profession's ongoing challenge will be to use AI advancements skilfully and ethically without losing sight of the fundamental human elements of caring.

Ireland's Department of Health's Report of the Expert Review Body on Nursing and Midwifery (2024) acknowledges that "the area of digital health practice will continue to grow exponentially over the next ten years, with expected innovations in AI to support clinical decision making [and] machine learning from a range of health data sources" (p. 76). The Nursing and Midwifery Board of Ireland's (NMBI) 'Digital Health Competency Standards and Requirements', published in 2023, does not specifically reference AI.[17] However, recognising the dynamic nature of the current landscape, the document states that "this is not an exhaustive list as digital solutions will evolve rapidly over time as will the potential learning opportunities for students".[17] The NMBI's 'Code of Professional Conduct and Ethics for Registered Nurses and Registered Midwives'[18] does introduce AI and advises the following caution in relation to its use: 'Practitioners should be cautious about the interpretation of AI recommendations, as they must retain assessment and judgment, and ultimate accountability for their actions and omissions'.[18]

In the UK, the Royal College of Nursing (RCN) occupies a unique position, acting as both a trade union and a professional body. This dual role allows it to support nurses’ professional development while also representing their employment interests. As such, the RCN is well positioned to identify both the opportunities and potential threats that artificial intelligence presents to the nursing profession. For example, while acknowledging that there are many forms of AI and AI tools available, the RCN has proposed that AI can “help with career planning, career exploration, career research, or even interview preparation”.[19]

The policy landscape surrounding AI in healthcare and nursing is evolving rapidly. While frameworks such as those of the WHO and the NAM guide ethical AI use in practice, the dynamic nature of the technology means that rigid, long-term policies may lose their applicability within a relatively short time. Consequently, nursing and allied health bodies globally are issuing their own guidance, acknowledging AI's potential for clinical support and education. Crucially, they emphasise that technology must not undermine core nursing principles such as compassion, trust, and human accountability. Nurses are challenged to use AI skilfully while maintaining ethical oversight and addressing issues such as bias and accuracy within this fluid context. As the American Nurses Association[16] insists, the integration of AI in practice must not alter the goals of patient care.

4. Juxtaposed positionality to AI in nursing education

Nursing, along with many other health professions, has both a higher education and a regulatory context for the introduction of AI within the field. Since the emergence of generative AI, including recent iterations such as ChatGPT-4, responses from professional bodies, academics and related leaders in nursing have ranged from lukewarm acceptance to full approval and genuine embrace of the technology. This is best captured by Le Lagadec, Jackson and Cleary:[20] 'Like any change, AI has had a mixed response in nurse education, with some embracing the advent of AI as nothing more than a helpful tool, and others having reservations about the authenticity and academic integrity of AI-generated use and outputs' (p. 3883).

A closer look at positionalities towards AI, particularly those that are lukewarm about the technology, reveals a particular nervousness and concern about the introduction of AI within healthcare. For example, Abdulai and Hung[21] note that: 'the reductionist approaches of ChatGPT may be overly simplistic and may not be capable of analysing or understanding clients that present with complex health challenges or tailoring responses to individual cases' (p. 2). In addition, Woodnutt et al.[22] argue that: "we are at a critical junction with AI, and careful incorporation of it with appropriate governance could improve care outcomes but erroneous use could cause significant harms" (p. 85). Clearly, there are legitimate concerns that require further discussion and analysis, as these more cautious views may ultimately prove well founded.[23]

Several areas of discussion are emerging within the literature that outline specific problems and difficulties with AI in nursing. These are effectively summarised by O'Connor et al.[24] in a recent paper that identifies both opportunities and threats for nursing students using AI. The opportunities focus on the potential for enhanced student learning capacity, while the threats centre on five key areas: (1) data privacy/security and copyright; (2) digital inequality; (3) AI discrimination against non-native-English students; (4) dehumanised patient care; and (5) job losses secondary to AI technology. Furthermore, in a recent systematic review investigating the utility of ChatGPT in healthcare education, research and practice, Sallam[25] identified thirteen key and emerging risks/concerns. Table 1 contrasts some of these risks with their application to nursing and patient care planning/clinical decision making.

Table 1. Selected risks/concerns identified by Sallam[25] and their application to nursing and patient care planning/clinical decision making

| Risk/concern (Sallam[25]) | Application to nursing and patient care planning/clinical decision making |
|---|---|
| Ethical issues (e.g., risk of bias, discrimination based on the quality of training data, plagiarism) | Personal patient data uploaded to AI; patient care interventions that are biased and discriminatory and that perpetuate bias and discrimination; AI outputs that do not acknowledge the original data source |
| Hallucination (the generation of scientifically incorrect content that sounds plausible) | Treatments that are dangerous and a risk to patients; patient safety compromised by inaccurate information or analysis by AI; misinformation leading to learning gaps |
| Transparency issues (black box application) | Treatment interventions that cannot be validated or verified |
| Risk of declining need for human expertise with subsequent psychologic, economic and social issues | Poor oversight in diminished healthcare environments leading to patient safety issues |
| Concerns about data privacy for medical information | Patient data, including sensitive information, uploaded to public AI, leading to ethical and professional dilemmas |
| Risk of declining clinical skills, critical thinking and problem-solving abilities | AI replacing nurses and other health care professionals; AI leading to a decline in patient care planning capacity and quality, and a drift towards generic artificial norms |
| Legal issues (e.g., copyright issues, authorship status) | Original sources relating to care planning and subsequent clinical decision making not acknowledged |
| Unlimited adoption of artificial intelligence | Diminished criticality and evaluation by nursing personnel who are over-reliant on AI |

The risks identified by O'Connor et al.[24] and Sallam[25] can be further distilled into three specific questions or areas of contention: (1) how AI generates outputs, (2) the nature of what AI generates, and (3) the implications of AI for society.

4.1 How are AI outputs explained?

The 'how' question around AI and its outputs centres on the unexplained 'black box' nature of AI-generated content,[26] which Collins[27] argues leads to a general lack of understanding of how AI works (p. 1). Pavlidis[28] refers to the 'imperative of explainability' of AI, and with this has emerged the concept of 'Explainable Artificial Intelligence (XAI)', an attempt to illuminate and describe the 'under the bonnet' analysis steps and outputs that emerge following AI prompting. XAI offers significant potential to provide transparency in decision making, minimise medical errors, improve the detection of diseases and uncover biases underpinning AI outputs.[29] Fairness and equity are therefore enhanced with XAI, as health delivery processes can be better understood and biases in outputs can be scrutinised.[29]

For example, Alderden et al.[30] devised an AI-assisted hospital-acquired pressure injury (HAPI) risk assessment model that included an 'explainable Artificial Intelligence dashboard' to support nursing professionals in their decision making. Wynn,[31] however, draws on ethical discourses to offer distinctive insights into how 'black box' AI, otherwise described as Non-Explainable AI (NXAI), can be adopted responsibly within nursing practice: 'rather than full explainability, the focus shifts to ensuring that NXAI aligns with patient values, clinical goals, and nursing responsibilities, ultimately supporting patient-centred care' (p. 297). Wynn[31] also presents a number of examples where there are clear merits in using AI outputs that lack an underpinning rationale but instead present 'trends, emerging risks, or inefficiencies that nurses might otherwise overlook in daily practice' (p. 296).

4.2 AI-generated content may be biased and discriminatory

As in other fields, the use of AI in health care may lead to biased, discriminatory, or incorrect information being provided to health care professionals to inform their clinical decisions, with unintended or dangerous outcomes. Such bias extends beyond clinical information to how the profession itself is portrayed. For example, Byrne et al.[32] conclude that: 'The generation of AI images may contribute to difficulties in recruiting nurses to aged care facilities due to deeply embedded ideologies replicated in images, influencing the perception of the nursing discipline and potential nursing recruits' (p. 7). In addition, a number of ethical concerns associated with AI centre on patient care, including upholding privacy, obtaining informed consent, and the storage and security of medical records.[33] For example, patient data uploaded to AI platforms may lack adequate privacy controls.[34] Hallucinations, infodemic outputs, academic fraud, absent references and copyright challenges all fall under the umbrella of outputs that can seriously undermine AI as a source of accurate and reliable knowledge. Such concerns may affect whether AI can be fully embraced within nurse education.[35]

4.3 What are the potential impacts of AI?

The 'impact' question by its nature draws significant attention in the literature: while AI offers tremendous promise and potential to the nursing profession, its negative societal impacts continue to be debated both in the literature and in other fora. These are best captured as the four Ds: Deskilling, Dehumanising, Dishonesty, and Differential access.

4.3.1 AI-associated deskilling (AID)

AI-driven automation may have unintended consequences for student nurses' learning, especially at the beginning of their professional educational journey. Nilsen,[36] for example, concludes that: 'nurses traditionally develop their skills through a progression from basic to advanced tasks, with fundamental skills laying the groundwork for expertise. However, AI driven task automation might skip this foundational learning stage, potentially leading to deskilling' (p. 4).

The potential deskilling of qualified nurses through ubiquitous access to AI stems from two related concerns. First, AI-related automation may erode healthcare professionals' existing expertise;[36,37] second, with the ongoing and pervasive introduction of AI across so many domains of practice, and without realistic and immediate continuing professional development, healthcare staff risk becoming deskilled in the application of AI itself. This is best captured by the WHO,[38] which concludes that 'AI …could reduce the size of the workforce, limit, challenge or degrade the skills of health workers, and oblige them to retrain to adapt to the use of AI' (p. 2). Nurses must therefore develop a holistic understanding of how AI and patient care intertwine,[39] and new skills will emerge as the technology continues to evolve.[40] Those skills will need to be recognised in professional competency frameworks.

4.3.2 Dehumanised healthcare delivery

"It is not a human but is designed to be able to communicate and interact with people in a way that is similar to how a person would"[41] (p. 2).

AI-enabled robots will replace humans in specific tasks[42] and, more pointedly, 'are now being programmed by humans to act human-like to simulate the human nurse to attend to patients' holistic care needs'[43] (p. 2). Will AI therefore lead to diminished nurse-patient contact and to dehumanised nursing practice? In addition, as outlined earlier, concerns remain as to whether AI interventions will ultimately decrease nurses' emotional intelligence and erode their social skills.[24]

Replacing at least some human activity with AI alternatives seems almost inevitable in healthcare delivery, as in other fields. What remains uncertain, however, is the threshold at which AI will be accepted or included within a nursing care context. Concerns remain, for example, about whether AI outputs will provide accurate and safe guidance to nursing staff in various healthcare contexts. Woodnutt et al.[22] explored whether ChatGPT (in 2023) could devise a suitable mental health nursing care plan for a patient and concluded that the outputs contained 'significant errors' (p. 79) which would have led to patient harm. In addition, stereotypes emerged in the care plan, including references to mental ill health and self-injury. Furthermore, '…ChatGPT made an assessment of Emily as someone who uses substances despite this not being in the input data'[22] (p. 85). AI programs are, however, evolving at tremendous pace,[44,45] and more recent ChatGPT iterations are likely to produce improved outputs. Because AI content is determined by the quality of the data at its disposal,[21,46] future AI outputs have the potential to improve and to demonstrate greater applicability and validity.

While AI appears to have the potential to replace humans in healthcare delivery, many dispute this, arguing that AI should instead be seen as a 'powerful ally that can enhance the care nurses provide'[39] (p. 108). Human oversight and decision-making by nursing professionals remain critical to safe practice at this point in time,[40] and AI should be introduced in conjunction with, and not instead of, nurses' professional expertise.[47,48]

4.3.3 Dishonesty

The risk of cheating and dishonest practices by students who covertly use AI to complete assessments is a significant discussion point in higher education,[49] particularly for educators who are charged with ensuring academic integrity. This is indeed a "new world"[50] for student and educator alike. As the National Academic Integrity Network[51] observes of such technologies, 'if they are used to bypass rather than support thinking, or used to acquire academic credit which has not been earned via real, intellectual engagement with the subject of study, then they can undermine the educational enterprise' (p. 15). Designing assessment of student learning therefore now presents much greater challenges to educators,[52,53] and there is an urgent need at departmental or faculty level to revise all forms of assessment to ensure that they are 'fit for purpose'.[54]

Revising assessment strategies within programmes will only partially resolve the AI conundrum, particularly given that, as O'Connor et al.[55] describe, 'educators and students at schools and universities have been conflicted about the use of generative AI tools' (p. 2). Coinciding with the introduction of AI technologies, a values discourse is emerging within higher education that promotes an ethos centring on 'integrity, trust, and truthfulness as being at the heart of learning, knowledge discovery and creativity'[51] (p. 9). In addition, the core values that underlie health care professionals' competency to practise must remain at the fore in guiding their navigation and use of AI technologies.[20,46]

4.3.4 Differential access to AI

Yasin et al.,[56] in a recent scoping review, highlight that equitable access remains a challenge to the introduction of AI in nursing research. The introduction of AI brings with it specific challenges around equality of access, or digital inequity,[24] both globally for citizens in countries with limited access,[54] and locally for students in educational institutions who cannot afford a monthly subscription to premium AI services.[49] Differential AI access for students[51] therefore places a responsibility on educational bodies to ensure that functional and safe AI is made available to all students, including both the technology itself[49] and the learning opportunities that it subsequently facilitates.[34]

While AI has the potential to transform healthcare in many ways, including diagnostics/prevention, pharmacology, and diverse treatment areas,[57] examples such as the political blocking of access to frontier AI models in thirty countries[54] and the 'inability to follow an AI's suggested diet due to costs'[58] (p. 1) highlight the wider societal inequalities at play among potential users.

The introduction of AI has therefore brought with it more questions than answers. Concerns such as the four 'D' impacts outlined here, as well as others, can be further categorised into several themes and related questions that are now emerging in many healthcare disciplines, and these will form the basis for discussion and analysis in the years to come (see Table 2). A potential vacuum in AI competency and literacy exists among nurse educators, and the sooner answers are found to the many questions that have emerged, the greater the opportunity to resolve the juxtaposed stances towards embracing AI that currently prevail.

Table 2. Emerging themes and questions relating to the introduction of AI in healthcare

| Theme | Emerging questions |
|---|---|
| Patient safety | • How can regulatory professional bodies ensure that patients' lives and welfare are protected when AI is introduced in diagnostic, treatment and drug therapy contexts? |
| Patient care elements | • Will healthcare staff accept and stand by future AI-generated care plans that have 100% validity but lack a clear underpinning rationale or logic? • How can interpersonal communication, including empathy, compassion and care, be maintained within the context of AI inclusion in health care? • How can holistic care and treatment be delivered in tandem with AI interventions? |
| Automation, decision making and accountability in healthcare | • In relation to automation and accountability for decision-making in patient care, who makes the final treatment decision? • What underpinning data is the decision based on? • What logic or problem solving preceded the recommendation ('black box')? |
| Diminished clinical decision making | • Can AI lead to diminished clinical decision-making skills amongst health care professionals? • Can future decisions in relation to clinical practice be completed without human interface? |
| The nature of the knowledge emerging from AI | • How can one be assured that outputs emerging from AI are valid and do not contain bias, fraudulent research, hallucinations etc.? • How can infodemic and misinformation be managed and minimised? |
| Equality, diversity and inclusion and AI | • Will digital discrimination lead to diminished access within global health care? |
| Ethical | • How can patients' data and privacy be protected from inappropriate/commercial uses? • Who has copyright and authorship status with respect to training data? |
| Academic dishonesty | • How can chatbots avoid accusations of plagiarism when the model's training data includes copyright sources? • How can students be supported to use technology in a way that upholds academic and professional values, specifically in relation to Artificial Intelligence? • How will AI co-creation change the concept of academic honesty in educational and research contexts? |
| Social and environmental | • Is data storage sustainable and environmentally friendly? • Will model training and production continue to become more efficient and push down the environmental cost of widespread use of AI? • Will current and future AI developments lead to job losses? |

5. Curricular areas of focus in healthcare

5.1 Twenty-first Century graduate attributes of nurses in the era of AI

One of the outcomes of the rapid development and uptake of AI is that it is forcing us to re-evaluate our understanding of the skills and competencies that graduates of our programmes will need in this part of the 21st century. Much has been written about the need to develop so-called 21st Century Skills in learners, a term that usually refers to various combinations of digital and information literacy, an entrepreneurial mindset, cultural competency, agility, risk tolerance and so on.[59] The current healthcare landscape requires professionals equipped with skills that extend beyond traditional clinical competencies, with digital and now AI literacy emerging as new dimensions of effective practice. Digital literacy has evolved beyond technical proficiency in using computers for everyday tasks to critical engagement with various technologies and data sources, including AI.[60] With technology becoming deeply embedded in every sector, there is an urgent need for training programmes that prepare, and continually update, professionals who can not only use the tools functionally but also have a critical, even if basic, understanding of how these systems work and of the in-built biases and limitations that all technology has.

Recently, a plethora of AI literacy/competency frameworks have been developed that attempt to provide guidance on the skills and competencies necessary to interact safely and responsibly with AI in work, learning and everyday life.[49,61,62,63,64,65] These invariably include some variation of considerations for how to evaluate AI outputs, equity and ethical considerations, data privacy, environmental considerations, informed use, transparency, and human oversight and accountability. AI literacy encompasses the ability to critically evaluate AI-generated information, recognise potential biases in algorithmic outputs, and make informed decisions about when and how to incorporate AI-generated recommendations into clinical practice. The focus is on developing transversal and transferable skills, rather than proficiency with individual systems that rapidly become outdated. AI literacies will allow graduates to collaborate effectively with these systems while maintaining human oversight and accountability.

Nowhere is this more evident than in health care, where the intersection of AI literacy with clinical reasoning and decision making can literally be a matter of life and death. Recognising when to trust an AI suggestion, and when and how to verify outputs, is an extension of information and digital literacies, but it requires a nuanced set of skills and attitudes, including an understanding of the risks within one’s professional practice. Cultivating these skills requires intentional educational interventions and curriculum design that expose students to AI in a safe, low-stakes environment where they can practise those skills (just as they do for clinical skills).

Exposure to and access to technology alone will not, however, develop the necessary skills; these skills need to be scaffolded throughout the programme and evaluated, just as clinical and other skills are evaluated, in both simulated and authentic performance environments.[66,67] The ability to contextualise AI recommendations within one’s broader clinical knowledge and the context of the individual clinical scenario is becoming a critical part of a healthcare professional’s competence. These AI competencies are additional to, and complement, the existing suite of domain skills that graduates must develop, and professional bodies will need to rapidly adapt their guidance on the competencies that graduates need to practise in their professional contexts.[14,68]

5.2 AI learning moments for healthcare students

The potential that AI brings to healthcare is immense and, through a nursing lens, the possibilities appear endless;[46] for example, with a few keyboard clicks, large amounts of data can be analysed and accurate predictions of treatment outcomes generated.[69] Two key parallel themes are currently emerging in the nursing education literature with respect to AI. The first centres on the theoretical possibilities and effective means of introducing AI in educational contexts, while the second is based on primary research studies (e.g. randomised controlled trials) and secondary research such as systematic reviews that have explored the impact or effectiveness of AI in specific healthcare educational contexts.

In relation to the former, O’Connor et al.[55] offer the following example of how AI can be used effectively in nursing education: ‘a chatbot could be asked to explain up-to date social, cultural or political issues affecting patients and healthcare in different regions and countries. The AI-generated output could be cross-checked by students to determine its accuracy’ (p. 3). AI can also be used to quickly generate multiple case studies at various levels for students to work with, meaning that instructors can provide more varied and nuanced learning opportunities than would be possible by developing each from scratch.[70] O’Connor et al.[55] also offer examples in which students can enhance their patient education skills by using AI to devise bespoke, individualised intervention packages, for example for patient lifestyle changes. Skills associated with students’ AI use include, for example, developing effective prompting strategies[49] on a ‘trial and error’ basis[71] and reinforcing the process as well as the product or output that emerges from the activity.

Even though AI is still relatively new in many disciplines, a recent systematic review by Crompton and Burke[72] concluded that research in higher education in the form of randomised controlled trials (RCTs) examining the impact or effectiveness of AI is growing rapidly. The studies were categorised into five key uses: (1) student assessment; (2) predicting and identifying students at risk of not continuing their studies; (3) supporting students using AI; (4) intelligent tutoring systems; and (5) managing student learning.[72] Studies that have examined the impact or effectiveness of AI in nursing education have shown mixed results.

For example, a recently completed RCT by Akutay et al.[73] concluded that introducing AI pedagogy enhanced student nurses’ case management performance and matched instructor-led teaching in overall satisfaction with the delivery of the content. However, Yahagi et al.[74] examined the impact of an AI chatbot, compared with conventional anaesthesia nurse education, on pre-operative patient anxiety and found no significant difference between the two approaches. They concluded that chatbot algorithms needed to be revised to improve their performance and that their introduction should ‘complement not replace human healthcare providers’ (p. 767). In a recent scoping review examining the impact of AI on nurse education, Lifshits and Rosenberg[75] set out its significant potential while also urging caution owing to weaknesses similar to those outlined earlier.

Finally, Ma et al.[76] completed a robust systematic review of the role of AI in shaping nurse education and found that, while AI has been shown to have a positive influence on student learning, challenges remain for higher order learning, such as scenario-specific communication skills and clinical decision making. Ma et al.[76] proposed research recommendations that aim to ‘optimize the emotional interaction and cognitive support functions of AI tools’ as well as ‘exploring the organic combination of AI and traditional teaching methods to achieve more comprehensive clinical ability training’ (p. 11). These are timely research outcomes given that, as Tornero-Costa et al.[77] argue, in the area of mental health care there has been an ‘overly accelerated promotion of new AI models without pausing to assess their real-world viability’ (p. 11).

5.3 Critical thinkers and growth in AI

A key objective of education is to prepare students to become critical thinkers,[78] and the emergence of AI has focused educators’ minds on how the higher order skills that underpin decision making in clinical practice can be maximised in the current technology-enhanced climate. AI has the potential to remove the need for human decision making entirely in some contexts, such as in the use of our mobile phones or driverless cars;[20] however, decisions on patient care still require human skills such as critical thinking and empathy.[52]

AI will therefore have a greater role in supporting the nursing profession in patient care decisions; however, there is also a risk that less experienced nurses may become overly reliant on its outputs.[79] The same is true in education, where an ‘increased dependency of teachers and students on GenAI tools to seek suggestions may lead to the standardization and conformity of responses, weakening the value of independent thought and self-directed inquiry’ (p. 37).[54] This concern is supported by recent research in which students’ engagement with chatbot-based learning highlighted the risk of fostering dependency on such tools.[80] Ahmad et al.[81] are more pointed: ‘Dependency on AI technology in decision-making must be reduced to a certain level to protect human cognition’ (p. 11).

A major challenge for all educators, therefore, is striking a balance for learners: on the one hand, becoming proficient in using AI and other information technologies in preparation for professional practice, and on the other, avoiding becoming overly reliant on them, to reduce potential negative human consequences. Future research must examine how AI can support, rather than displace, critical thinking and problem-solving skills amongst learners.[80] Being a dependable and self-reliant decision maker is never more important than when AI technology fails, as happened in Spain recently (May 2025), or, as Kormaz[82] conjectures: ‘This dependency might be particularly concerning in situations where AI systems fail or in contexts where they are not available, necessitating a return to traditional diagnostic and treatment methods’ (p. 88). Nurses must always retain complete oversight in clinical patient care scenarios, especially where ambiguity exists, for example during increasingly common cyber security incidents in which there is no access to technology. Woodnutt et al.[22] capture the possible future potential of AI tools such as ChatGPT, describing it ‘as a prompt for nurses who could evaluate its output against guidance, evidence and experience’ (p. 85).

5.4 Supporting learning: AI simulation, digital tutors and accessibility

Glauberman et al.[69] have concluded that AI brings with it the possibility ‘to supercharge simulation by offering scenarios that are realistic and tailored to students’ individual learning needs’ (p. 303). A crucial game changer with AI simulation is the possibility of more realistic two-way interaction with AI-generated patients and scenarios,[24,83] thereby creating a more valid and authentic experience for students in healthcare education. This is best captured by Hamilton,[84] who discusses the use of interactive avatars in AI simulation: ‘These AI-driven avatars could react dynamically to treatments, providing immediate and realistic feedback. Such advancements will bridge the gap between simulation and real-life clinical encounters, greatly enhancing preparedness for actual patient interactions’ (p. 12). In addition, these interactions may provide greater diversity and nuance in scenarios, as AI-simulated patients can take on any persona, age or cultural profile they are prompted to adopt. Using AI tools developed in other countries locally may also help students develop intercultural competence and cross-cultural communication skills.[75] Challenges remain, however, in the current state of AI simulation technology, particularly the limited interactivity of virtual avatars and their limited emotional responses.[76]

One of the rapidly emerging outcomes of AI integration into education is the development of personalised AI tutors trained specifically on the content of students’ courses. These infinitely patient AI tutors can act as ‘study buddies’, helping students learn specific course content, providing feedback on their progress, challenging them to learn more, and encouraging them through the difficult parts of the learning process. The advantages of an AI tutor for students include that it can scale almost infinitely, providing the same level of support to all students; it never tires or sleeps, so it is always available when the student is ready to learn; it provides feedback instantly; it does not judge students; and it can explain concepts as many times as needed, in different ways, until the student understands.

There are already examples of these tools being used to great effect, with students performing better on assessments and reporting satisfaction with their experiences.[85] These tools can help to solve the challenge of personalising instruction and learning support at scale, especially in high-stakes fields such as nursing, which have a significant body of knowledge that students need to become competent in. Nursing educators have long known that there are elements of the discipline that act as troublesome knowledge[86] or threshold concepts[87] for nursing students,[88,89] which can create roadblocks to progression in the programme. A potential future use of AI tutors is to support students to focus on those challenging elements of disciplinary knowledge that require significant levels of engagement.[90] Early indications suggest that use of AI tutors can lead to significant learning gains, even over those typically seen in active learning classes.[91]

Another promising area in which AI has significant potential to improve student success is support for students with disabilities. As the proportion of the population with disabilities continues to increase, the number of students entering universities with recognised and diagnosed disabilities is increasing concomitantly, but the infrastructure needed to support those students within the existing model is challenging to scale and maintain. Often the accommodations offered do not address the real needs of the learner, and many students with disabilities do not register officially with accessibility offices, so they do not receive the accommodations they need to succeed.[92] Generative AI has the potential to be transformative for learners with disabilities because bots can be customised relatively easily to provide exactly the academic support the learner needs. For example, students who need to develop visual representations of knowledge can now do so in a fraction of the time it takes by hand. Captions or transcripts of lectures can be produced easily, accurately and cost-effectively with applications such as Otter.ai. Students with dyslexia or processing disorders can draft language in the way their brain structures knowledge and translate it into academic formats using AI editing supports. What will be essential is that universities recognise the legitimate use of AI tools as assistive technologies and adapt policies to allow for their use.

5.5 Ethical considerations

There are a growing number of ethical tensions and debates on AI use emerging in the nursing literature.[21,79] Of particular concern are the protection of patient autonomy, data privacy, algorithmic transparency and bias, and professional accountability.[33] The speed at which AI use is growing makes it difficult for policy makers to respond;[84] however, as Lloyd[46] urges: ‘Nurses, midwives, and healthcare institutions must approach AI integration thoughtfully ensuring that ethical and regulatory concerns are addressed’ (p. 27). The technology poses ethical uncertainties,[20] and the hope is that critical debate will minimise problems that may emerge in future.[25] The need to educate those involved in teaching and learning about the power of these tools[55] is aptly illustrated by Sallam’s[25] analogy: ‘In the 2004 Formula 1 season, the Ferrari F2004 (the highly successful Formula 1 racing car) broke several Formula 1 records in the hands of Michael Schumacher, one of the most successful Formula 1 drivers of all time. However, in my own hands — as a humble researcher without expertise in Formula 1 driving— the same highly successful car would only break walls and be damaged beyond repair’ (p. 17).

Exploring the ethical considerations associated with introducing AI in nurse education[93] is a crucial first step. Furthermore, giving students the opportunity to critically explore the ethical, legal and social implications[94] of introducing AI in healthcare education will enhance their learning and understanding. Educators have a responsibility to adhere to codes of ethics in relation to AI use.[20] Within curriculum development, there is also an educational imperative to ensure that nursing students are taught how to use AI ethically and effectively,[95] and, as outlined by De Gagne et al.,[93] ‘principles must be woven into the fabric of the curricula’ (p. 1). At a macro level, students must have a critical understanding of how AI technology is trained, developed and deployed from a legal and ethical perspective.[51] At a more applied level, educators must reinforce the ethical expectations around using chatbots,[96] particularly given the speed at which they are growing and evolving.

5.6 Assessment of student learning

The impact of generative AI on assessment practice is likely to be the most significant near-term disruption to higher education, but it also reveals the need for longer-term fundamental changes in the way that learning is evaluated. In this new era, where AI is capable of answering questions and completing tasks at or above human levels across most knowledge domains, traditional approaches to assessment are no longer fit for purpose. Assessment experts are advocating a move away from approaches that use a point-in-time product as a proxy for knowledge or learning (e.g. written assignments, unsupervised quizzes and exams) towards more holistic approaches that focus on process rather than product.[97]

In nursing education, there is a critical imperative to ensure that graduates meet required competencies before they are licensed to practise, which may raise quality assurance concerns about how competency is best demonstrated and whether current approaches can still meet those requirements in the AI era. Such debates have been ongoing for as long as competency-based education has existed, but it is important to note that nursing education already uses many of the assessment approaches that are now being recommended to ensure the reliability and validity of assessment in light of AI use. For example, observed simulations, objective structured clinical examinations (OSCEs), continuous assessment, simulation debriefs and clinical placements all provide opportunities for reliable evaluation of student competencies, even with AI.

What nursing educators may need to consider is what Liu and Bates[98] and the University of Sydney refer to as two-lane assessment. In this model, the first lane consists of secure assessments of learning that focus on specific skills, knowledge or competencies that are critical to the success of a graduate from the programme and which are likely to need to be demonstrated without assistance. These should be considered key touch points throughout the programme and, in the case of nursing, may be covered by existing authentic assessment approaches, although some elements, such as medical calculations, information/digital literacy or ethical practice, may not yet be captured in secure assessments. Lane two comprises all other forms of assessment, in which it can be assumed that students will use AI assistance and in which such use should be considered normal practice.

5.7 Applying a CLA framework to AI teaching and learning in a nursing curriculum

What is clearly emerging from this review is that nursing regulatory policy accepts that AI will be part of the future landscape of healthcare while, understandably, advising caution in how the technology is embraced.[18] There is a sense, therefore, that with policy in this space largely acknowledging that AI has a future in nurses’ professional lives, the juxtaposition articulated at the outset of this paper may be narrowing towards an acceptance that AI use will become more mainstream and will require augmentation of existing competencies. Earlier, we noted that the AI assessment scale devised by Perkins et al.[99] offers a clear direction of travel for how AI can be included in assessment, while Curtis[100] conceives a middle lane between ‘Lane 1 - no AI’ and ‘Lane 2 - full AI use’, further reinforcing a more pragmatic opportunity for AI integration in educational contexts. As outlined in this paper, there are many frameworks for applying AI literacy in teaching and learning practice (e.g. the Digital Education Council AI Literacy Framework); however, to date few curricular frameworks provide practical guidance to educators on how AI can be incrementally applied within the middle lane of teaching and learning.

The constraints-led approach (CLA) is described as a nonlinear pedagogical approach[101] to learning that initially emerged within coaching, specifically skill acquisition, but has more recently also been applied to nursing[102,103] and medical education.[104] A key element of employing AI using CLA is strategically devising task and environmental constraints around how AI is integrated into domain-specific teaching, so that students respond and make decisions as a result of the affordances that emerge from the teaching activity. To maximise learning, the AI task and environmental constraints are introduced on a graduated basis; for example, students’ access to AI technology during clinical decision making can range from minimal to maximal and be introduced accordingly.

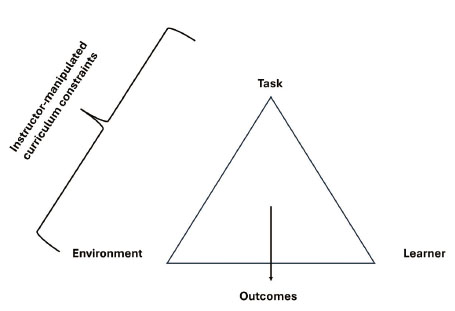

The CLA curricular approach therefore offers a level of structure and guidance for how the middle lane of integrating AI in curricula might work. The middle lane contains what Perkins et al.[99] describe as (1) AI-assisted idea generation and structuring; (2) AI-assisted editing; and (3) AI task completion with human evaluation, all of which have the potential to be embedded, taught and assessed in nursing curricula. The primary role of the educator, therefore, is to create a learning environment that includes specific ‘task’ or ‘environmental’ constraints that require the student to respond and take appropriate action (see Figure 1). The boundaries created by these educator-set constraints, for example the availability of AI technologies, decision-making timescales, the availability of experts or resources to assist with AI outputs, and the professional and ethical considerations associated with decision making, present challenges and opportunities for students to learn and adapt continuously in specified learning environments.

Figure 1. Overview of the CLA applied to teaching (adapted from Renshaw et al.[105])

An example of how AI can be introduced using a CLA curricular approach is outlined in Table 3, using a simulation laboratory whose purpose is to teach clinical skills to nursing students. Simulation training using generative AI is becoming an effective pedagogical approach in nurse education,[106] as well as in many other healthcare contexts. Table 3 shows how CLA can be applied using AI to gradually increase the learning challenge for the student in a simulation laboratory. AI can formulate the simulation scenario and adjust the avatar’s responses, including the scale and challenge of the patient’s clinical presentation, in real time, requiring the student to adapt to planned changes in constraints. Planned CLA AI inputs within a simulation environment with defined constraints prompt the student to act in ways that promote learning.

| Constraint | Continuum of application | Influence on the learner |
| --- | --- | --- |
| Task - Clinical scenario | Continuum from minimum to maximum complexity with in-built constraints | The level of challenge introduced, based on the complexity of the scenario and its in-built constraints, will influence how the student responds and takes action. |
| Task - Time limitation | Time constraint set on a continuum based on the student’s exposure and experience | When time is constrained, the AI-generated scenario challenges the student to make expedient decisions. |
| Environmental - AI avatar patient responses | Avatar responses based on the clinical scenario | Multiple opportunities to amend and alter avatar responses validate the clinical scenario, ensure authenticity and influence how the learner responds to the task constraint. |
| Environmental - AI technologies | Learner has no, some, or full access to AI technologies to assist with decision making. | With no AI technologies, the learner must rely on experiential practice; some or full AI availability requires actions such as fact-checking to aid decision making. |
| Environmental - Access to support from the educator and other digital resources | The learner is constrained by how much support they will have while completing the AI-produced simulation. | Where educator input is low, the learner must think critically and make independent decisions. |
| Environmental - Ethical: data privacy and professional accountability | Considerations associated with professional obligations | Decision-making imperatives require ethical consideration and will help the learner make decisions from an ethical perspective. |

6. Conclusion

Nursing, along with many other health care professions, needs answers to the many questions that the introduction of AI in healthcare is posing, and the knowledge vacuum that currently exists reinforces a polarisation of opinion around its acceptance. In the meantime, policies are emerging from nursing regulators and other related bodies acknowledging that the technology is becoming an important aid for nurses in many facets of their professional lives. There is also a growing level of critical discussion and research on how AI can interact with and affect nursing practice, and its outputs will help to enhance and develop meaningful policies and guidelines in this area.

The inclusion of AI in healthcare has increased the need for digitally savvy healthcare professionals. Nurse educators must provide leadership in this area and develop curricula that embrace AI and other digital technologies so that future nursing professionals will have the necessary AI knowledge, skills and competence to provide care that is underpinned by reliable evidence. CLA has the potential to provide a clear signpost that can help educationalists embrace AI in their everyday teaching of domain-specific nursing knowledge.

Nursing has always been considered an art and a science, and AI now has the capacity to nurture and inform both pillars further by providing immediate and real-time inputs on patient care. AI has the potential to transform how health care is delivered by nurses now and in the future; however, its role will be to assist and support decision making, not to become the final arbiter. It is incumbent on nurse education and nursing regulatory bodies to develop robust curricula and guidance to ensure that AI is introduced with clear ethical hardwiring in place, so that a balance remains between its positive developments, such as automation and predictive analytics, and the primacy of patient safety. Finally, although this paper primarily discusses nurse education, we encourage educators in allied health and social care fields to explore how CLA could be applied to effectively incorporate AI within their respective curricula.

Acknowledgements

The collaboration that led to this paper was made possible through connections developed by the University of Windsor’s Office of Open Learning Visiting Fellows Programme, and the N-TUTORR Programme at Munster Technological University (Funded by the European Union).

Authors’ contributions

Review methodology: TF; data collection: WE, TF, NB; writing - original draft: WE; writing - review and editing: WE, TF, NB. All authors contributed substantive material to the final paper. All authors have read and approved the submitted version of the manuscript.

Funding

The APC for this article was funded by the University of Windsor through NB’s Director’s Research Grant. No other funding sources were used in the completion of this work.

Conflicts of Interest Disclosure

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

Informed consent

Obtained.

Ethics approval

The Publication Ethics Committee of the Association for Health Sciences and Education. The journal’s policies adhere to the Core Practices established by the Committee on Publication Ethics (COPE).

Provenance and peer review

Not commissioned; externally double-blind peer reviewed.

Data availability statement

The data that support the findings of this study are available on request from the corresponding author. The data are not publicly available due to privacy or ethical restrictions.

Data sharing statement

No additional data are available.

References

- Canadian Association of Schools of Nursing. Embracing ChatGPT in Nursing Education and Practice. 2024.

- World Health Organization (WHO). Harnessing Artificial Intelligence for Health. 2024. https://www.who.int/teams/digital-health-and-innovation/harnessing-artificial-intelligence-for-health

- Aveyard H, Bradbury-Jones C. An analysis of current practices in undertaking literature reviews in nursing: findings from a focused mapping review and synthesis. BMC Medical Research Methodology. 2019;19:105. PMID:31096917 doi:10.1186/s12874-019-0751-7

- Boland A, Cherry M, Dickson R. Doing a systematic review: A student’s guide. London: SAGE Publishing. 2017.

- Grant M, Booth A. A typology of reviews: an analysis of 14 review types and associated methodologies. Health Info Libr J. 2009;26(2):91-108. PMID:19490148 doi:10.1111/j.1471-1842.2009.00848.x

- Sukhera J. Narrative Reviews: Flexible, Rigorous, and Practical. Journal of Graduate Medical Education. 2022;14(4):414-7. PMID:35991099 doi:10.4300/JGME-D-22-00480.1

- Snyder H. Literature review as a research methodology: An overview and guidelines. Journal of Business Research. 2019;104:333-9. doi:10.1016/j.jbusres.2019.07.039

- Booth A, Sutton A, Papaioannou D. Systematic approaches to a successful literature review. London: SAGE Publications. 2016.

- Snilstveit B, Oliver S, Vojtkova M. Narrative approaches to systematic review and synthesis of evidence for international development policy and practice. Journal of Development Effectiveness. 2012;4(3):409-29. doi:10.1080/19439342.2012.710641

- Green B, Johnson C, Adams A. Writing narrative literature reviews for peer-reviewed journals: secrets of the trade. Journal of Chiropractic Medicine. 2006;5(3):101-17. PMID:19674681 doi:10.1016/S0899-3467(07)60142-6

- Asilomar AI Principles. Future of Life Institute. 2017. https://futureoflife.org/open-letter/ai-principles/

- Dilhac M, Abrassart C, Bengio Y. Montréal Declaration on Responsible AI. The Montreal Declaration Responsible AI. 2017. https://montrealdeclaration-responsibleai.com/

- High-Level Expert Group on Artificial Intelligence. Ethics Guidelines for Trustworthy AI. Brussels; European Commission. 2019.

- Australian College of Nursing. Artificial Intelligence. 2024. https://www.acn.edu.au/advocacy-policy/position-statement-artificial-intelligence

- National Academy of Medicine. An Artificial Intelligence Code of Conduct for Health and Medicine: Essential Guidance for Aligned Action. Washington, DC; The National Academies Press. 2025. doi:10.17226/29087

- American Nurses Association Center for Ethics and Human Rights. The Ethical Use of Artificial Intelligence in Nursing Practice. American Nurses Association. 2022.

- Nursing and Midwifery Board of Ireland. Digital Health Competency Standards and Requirements for Undergraduate Nursing and Midwifery Education Programmes (First Edition). 2023.

- Nursing and Midwifery Board of Ireland. Code of Professional Conduct and Ethics. 2025.

- Royal College of Nursing. Using Artificial Intelligence (AI) for Career Development. RCN Career Resources. 2024. https://www.rcn.org.uk/Professional-Development/Your-career/Artificial-intelligence

- Le Lagadec D, Jackson D, Cleary M. Artificial intelligence in nursing education: Prospects and pitfalls. Journal of Advanced Nursing. 2024;80(10):3883-5. PMID:38864369 doi:10.1111/jan.16276

- Abdulai A, Hung L. Will ChatGPT undermine ethical values in nursing education, research, and practice? Nursing Inquiry. 2023;30(3):e12556. PMID:37101311 doi:10.1111/nin.12556

- Woodnutt S, Allen C, Snowden J. Could artificial intelligence write mental health nursing care plans? Journal of Psychiatric and Mental Health Nursing. 2024;31(1):79-86. PMID:37538021 doi:10.1111/jpm.12965

- Amin S, El-Gazar H, Zoromba M. Sentiment of Nurses Towards Artificial Intelligence and Resistance to Change in Healthcare Organisations: A Mixed-Method Study. Journal of Advanced Nursing. 2025;81(4):2087-98. PMID:39235193 doi:10.1111/jan.16435

- O’Connor S, Permana A, Neville S. Artificial intelligence in nursing education 2: opportunities and threats. Nursing Times. 2023.

- Sallam M. ChatGPT Utility in Healthcare Education, Research, and Practice: Systematic Review on the Promising Perspectives and Valid Concerns. Healthcare. 2023;11(6):887. PMID:36981544 doi:10.3390/healthcare11060887

- O’Connor S, Booth R. Algorithmic bias in health care: Opportunities for nurses to improve equality in the age of artificial intelligence. Nursing Outlook. 2022;70(6):780-2. PMID:36396503 doi:10.1016/j.outlook.2022.09.003

- Collins G. Making the black box more transparent: improving the reporting of artificial intelligence studies in healthcare. BMJ. 2024;385:q832. PMID:38626954 doi:10.1136/bmj.q832

- Pavlidis G. Unlocking the black box: analysing the EU artificial intelligence act’s framework for explainability in AI. Law, Innovation and Technology. 2024;16(1):293-308. doi:10.1080/17579961.2024.2313795

- Ali S, Akhlaq F, Imran A. The enlightening role of explainable artificial intelligence in medical & healthcare domains: A systematic literature review. Computers in Biology and Medicine. 2023;166:107555. PMID:37806061 doi:10.1016/j.compbiomed.2023.107555

- Alderden J, Johnny J, Brooks K. Explainable Artificial Intelligence for Early Prediction of Pressure Injury Risk. American Journal of Critical Care. 2024;33(5):373-81. PMID:39217110 doi:10.4037/ajcc2024856

- Wynn M. The ethics of non-explainable artificial intelligence: an overview for clinical nurses. Br J Nurs. 2025;34(5):294-7. PMID:40063542 doi:10.12968/bjon.2024.0394

- Byrne A, Mulvogue J, Adhikari S. Discriminative and exploitive stereotypes: Artificial intelligence generated images of aged care nurses and the impacts on recruitment and retention. Nursing Inquiry. 2024;31(3):e12651. PMID:38940314 doi:10.1111/nin.12651

- Mohammed S, Osman Y, Ibrahim A. Ethical and regulatory considerations in the use of AI and machine learning in nursing: A systematic review. International Nursing Review. 2025;72(1):e70010. PMID:40045476 doi:10.1111/inr.70010

- Cleary M, Kornhaber R, Le Lagadec D. Artificial Intelligence in Mental Health Research: Prospects and Pitfalls. Issues in Mental Health Nursing. 2024;45(10):1123-7. PMID:38683972 doi:10.1080/01612840.2024.2341038

- Rony M, Numan S, Johra F. Perceptions and attitudes of nurse practitioners toward artificial intelligence adoption in health care. Health Science Reports. 2024;7(8):e70006. PMID:39175600 doi:10.1002/hsr2.70006

- Nilsen P. Artificial intelligence in nursing: From speculation to science. Worldviews on Evidence-Based Nursing. 2024;21(1):4-5. PMID:38240405 doi:10.1111/wvn.12706

- Lora L, Foran P. Nurses’ perceptions of artificial intelligence (AI) integration into practice: An integrative review. Journal of Perioperative Nursing. 2024;37(3):22-8. doi:10.26550/2209-1092.1366

- World Health Organization. Ethics and Governance of Artificial Intelligence for Health: WHO Guidance. Geneva; World Health Organization. 2021.

- Baker Stein M, Jones-Schenk J. The Future of Nursing: Navigating the AI Revolution Through Education and Training. The Journal of Continuing Education in Nursing. 2024;55(3):108-9. PMID:38422991 doi:10.3928/00220124-20240221-03

- Kovach-Clark S, Nursing & Midwifery Council. A pro-innovation approach to AI regulation. 2023.

- O’Connor S. Open artificial intelligence platforms in nursing education: Tools for academic progress or abuse? Nurse Education in Practice. 2023;66:103537. PMID:36549229 doi:10.1016/j.nepr.2022.103537

- Vrontis D, Christofi M, Pereira V. Artificial intelligence, robotics, advanced technologies and human resource management: a systematic review. The International Journal of Human Resource Management. 2022;33(6):1237-66. doi:10.1080/09585192.2020.1871398

- Lopez V. The art of nursing in the fourth industrial revolution: Lessons learned. Asia-Pacific Journal of Oncology Nursing. 2024;11(7):100498. PMID:38988826 doi:10.1016/j.apjon.2024.100498

- Archibald M, Clark A. ChatGTP: What is it and how can nursing and health science education use it? Journal of Advanced Nursing. 2023;79(10):3648-51. PMID:36942780 doi:10.1111/jan.15643

- Fabiano N, Gupta A, Bhambra N. How to optimize the systematic review process using AI tools. JCPP Advances. 2024;4(2):e12234. PMID:38827982 doi:10.1002/jcv2.12234

- Lloyd J. Revolutionising healthcare: The impact of Artificial Intelligence in nursing and midwifery. Australian Nursing and Midwifery Journal. 2024;28(3):7.

- McNeil B. Nursing Informatics: “Furiously-Paced” Artificial Intelligence (AI) Chatbot Technology – Early Findings and Cautions for Nursing. RN Idaho. 2024;46:3-4.

- Mohanasundari S, Kalpana M, Madhusudhan U. Can Artificial Intelligence Replace the Unique Nursing Role? Cureus. 2023;15(12):e51150. PMID:38283483 doi:10.7759/cureus.51150

- Farrelly T, Baker N. Generative Artificial Intelligence: Implications and Considerations for Higher Education Practice. Education Sciences. 2023;13(11):1109. doi:10.3390/educsci13111109

- Genuino M. The Double-Edged Sword of Artificial Intelligence (AI): The Impact of AI on the Development of Critical Thinking Skills among Nursing Students. New Jersey Nurse & Institute for Nursing Newsletter. 2023.

- MacLaren I, O’Brien G, Casey E. Generative Artificial Intelligence: Guidelines for Educators. Ireland; National Academic Integrity Network, Quality & Qualifications Ireland (QQI). 2023.

- Connor Nyberg C, Morris E. Letter to the editor: “Revolutionizing clinical education: opportunities and challenges of AI integration.” European Journal of Physiotherapy. 2023;25(3):127-8. doi:10.1080/21679169.2023.2198571

- Tertiary Education Quality and Standards Agency (TEQSA). Gen AI strategies for Australian higher education: Emerging practice. Canberra; TEQSA, Australian Government. 2024.

- Sabzalieva E, Valentini A. ChatGPT and artificial intelligence in higher education: Quick start guide. UNESCO Digital Library. 2023. https://unesdoc.unesco.org/ark:/48223/pf0000385146

- O’Connor S, Leonowicz E, Allen B. Artificial intelligence in nursing education 1: strengths and weaknesses. Nursing Times. 2023;119(10):1-4.

- Yasin Y, Al-Hamad A, Metersky K. Incorporation of artificial intelligence into nursing research: A scoping review. International Nursing Review. 2025;72(1):e13013. PMID:38967044 doi:10.1111/inr.13013

- Palaniappan K, Lin E, Vogel S. Global Regulatory Frameworks for the Use of Artificial Intelligence (AI) in the Healthcare Services Sector. Healthcare. 2024;12(5):562. PMID:38470673 doi:10.3390/healthcare12050562

- Kahambing J. Artificial intelligence as ‘vicarious curation’ between public health and inequality. Journal of Public Health. 2024;46(4):e755. PMID:38836567 doi:10.1093/pubmed/fdae089

- Aoun J. Robot-Proof: Higher education in the age of artificial intelligence. Cambridge, Massachusetts; The MIT Press. 2024.

- Hwang H, Zhu L, Cui Q. Development and Validation of a Digital Literacy Scale in the Artificial Intelligence Era for College Students. KSII Transactions on Internet and Information Systems (TIIS). 2023;17(8):2241-58. doi:10.3837/tiis.2023.08.016

- Annapureddy R, Fornaroli A, Gatica-Perez D. Generative AI Literacy: Twelve Defining Competencies. Digit Gov: Res Pract. 2024;6(1):1-21. doi:10.1145/3685680

- Bozkurt A. Why Generative AI Literacy, Why Now and Why it Matters in the Educational Landscape? Kings, Queens and GenAI Dragons. Open Praxis. 2024;16(3):283-90. doi:10.55982/openpraxis.16.3.739

- Digital Education Council. DEC AI Literacy Framework. The Digital Education Council. 2025.

- Hillier M. A proposed AI literacy framework. TECHE. 2023. https://teche.mq.edu.au/2023/03/a-proposed-ai-literacy-framework/

- Yi Y. Establishing the concept of AI literacy. Jahr – European Journal of Bioethics. 2021;12(2):353-68. doi:10.21860/j.12.2.8

- Bosun-Arije S, Ekpenyong M. Using the theory of symbolic interactionism to inform assessment processes in nurse education. Nurse Education in Practice. 2023;72:103781. PMID:37739884 doi:10.1016/j.nepr.2023.103781

- Gunawan J, Aungsuroch Y, Montayre J. ChatGPT integration within nursing education and its implications for nursing students: A systematic review and text network analysis. Nurse Education Today. 2024;141:106323. PMID:39068726 doi:10.1016/j.nedt.2024.106323

- Castonguay A, Farthing P, Davies S. Revolutionizing nursing education through AI integration: A reflection on the disruptive impact of ChatGPT. Nurse Education Today. 2023;129:105916. PMID:37515957 doi:10.1016/j.nedt.2023.105916

- Glauberman G, Ito-Fujita A, Katz S. Artificial Intelligence in Nursing Education: Opportunities and Challenges. Hawaii J Health Soc Welf. 2023;82(12):302-5.

- Deane W. The use of ChatGPT in nursing education: A novel approach to developing case studies. Journal of Nursing Education and Practice. 2024;14(12):26-31. doi:10.5430/jnep.v14n12p26

- Bellini-Leite S. Dual Process Theory for Large Language Models: An overview of using Psychology to address hallucination and reliability issues. Adaptive Behavior. 2024;32(4):329-43. doi:10.1177/10597123231206604

- Crompton H, Burke D. Artificial intelligence in higher education: the state of the field. International Journal of Educational Technology in Higher Education. 2023;20:22. doi:10.1186/s41239-023-00392-8

- Akutay S, Yüceler Kaçmaz H, Kahraman H. The effect of artificial intelligence supported case analysis on nursing students’ case management performance and satisfaction: A randomized controlled trial. Nurse Education in Practice. 2024;80:104142. PMID:39299058 doi:10.1016/j.nepr.2024.104142

- Yahagi M, Hiruta R, Miyauchi C. Comparison of Conventional Anesthesia Nurse Education and an Artificial Intelligence Chatbot (ChatGPT) Intervention on Preoperative Anxiety: A Randomized Controlled Trial. Journal of PeriAnesthesia Nursing. 2024;39(5):767-71. PMID:38520470 doi:10.1016/j.jopan.2023.12.005

- Lifshits I, Rosenberg D. Artificial intelligence in nursing education: A scoping review. Nurse Education in Practice. 2024;80:104148. PMID:39405792 doi:10.1016/j.nepr.2024.104148

- Ma J, Wen J, Qiu Y. The role of artificial intelligence in shaping nursing education: A comprehensive systematic review. Nurse Education in Practice. 2025;84:104345. PMID:40168750 doi:10.1016/j.nepr.2025.104345

- Tornero-Costa R, Martinez-Millana A, Azzopardi-Muscat N. Methodological and Quality Flaws in the Use of Artificial Intelligence in Mental Health Research: Systematic Review. JMIR Mental Health. 2023;10:e42045. PMID:36729567 doi:10.2196/42045

- Allen L, Kendeou P. ED-AI Lit: An Interdisciplinary Framework for AI Literacy in Education. Policy Insights from the Behavioral and Brain Sciences. 2024;11(1):3-10. doi:10.1177/23727322231220339

- Watson A. Ethical considerations for artificial intelligence use in nursing informatics. Nurs Ethics. 2024;31(6):1031-40. PMID:38318798 doi:10.1177/09697330241230515

- Xie Z, Wu X, Xie Y. Can interaction with generative artificial intelligence enhance learning autonomy? A longitudinal study from comparative perspectives of virtual companionship and knowledge acquisition preferences. Journal of Computer Assisted Learning. 2024;40(5):2369-84. doi:10.1111/jcal.13032

- Ahmad S, Han H, Alam M. Impact of artificial intelligence on human loss in decision making, laziness and safety in education. Humanit Soc Sci Commun. 2023;10:1-14. PMID:37325188 doi:10.1057/s41599-023-01787-8

- Korkmaz S. Artificial Intelligence in Healthcare: A Revolutionary Ally or an Ethical Dilemma. Balkan Med J. 2024;41:87-8. PMID:38269851 doi:10.4274/balkanmedj.galenos.2024.2024-250124

- Zidoun Y, Mardi A. Artificial Intelligence (AI)-Based simulators versus simulated patients in undergraduate programs: A protocol for a randomized controlled trial. BMC Medical Education. 2024;24:1260. PMID:39501219 doi:10.1186/s12909-024-06236-x

- Hamilton A. Artificial Intelligence and Healthcare Simulation: The Shifting Landscape of Medical Education. Cureus. 2024;16(5):e59747. doi:10.7759/cureus.59747

- Ward B, Bhati D, Neha F. Analyzing the Impact of AI Tools on Student Study Habits and Academic Performance. 2024. doi:10.48550/arXiv.2412.02166

- Perkins D. The many faces of constructivism. Educational Leadership. 1999;57(3):6-11.